In today's fast-evolving world of technology, one concept that's gaining traction is Retrieval-Augmented Generation (RAG). RAG combines retrieval and generative models so that text responses can draw on up-to-date information fetched at query time.

It pairs advanced LLM techniques with external search mechanisms that query a database of documents and other content. As a result, answers can draw on relevant, accurate source material rather than on the model's training data alone.

In this article, we will explore the future of RAG, its various applications in the enterprise space, and its potential impact on industries that rely on language models and augmented knowledge. By looking more closely at how retrieval works, how sources are embedded, and how prompts are built, we can better understand how RAG transforms customer interactions and internal systems.

What is RAG in the AI Context?

RAG stands for Retrieval-Augmented Generation, a novel approach in artificial intelligence that combines the strengths of retrieval-based models with the text-generation capabilities of LLMs.

Essentially, RAG draws on a database of source documents, typically indexed as embeddings, to extend the knowledge available to the model beyond what it learned during training. At query time, the system retrieves specific, context-relevant snippets and passes them to the model. The model then uses them to generate responses that are both fluent and grounded in the source material.
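Retrieval over embeddings boils down to a nearest-neighbour search: documents whose vectors point in the most similar direction to the query's vector are returned first. The minimal Python sketch below assumes each document has already been turned into a vector by some embedding model. The three-dimensional vectors and the `top_k` helper are hypothetical, chosen by hand so the arithmetic stays easy to follow.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 = same direction, 0.0 = orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Avoid dividing by zero for empty or all-zero vectors.
    return dot / (norm_a * norm_b)

def top_k(query_vec: list[float], doc_vecs: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k documents whose embeddings are closest to the query."""
    ranked = sorted(
        doc_vecs,
        key=lambda doc_id: cosine_similarity(query_vec, doc_vecs[doc_id]),
        reverse=True,
    )
    return ranked[:k]

# Hypothetical 3-dimensional embeddings, chosen by hand for readability.
# A real system would obtain much longer vectors from an embedding model.
doc_vecs = {
    "returns_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.1],
    "gift_cards": [0.0, 0.2, 0.9],
}
query_vec = [0.8, 0.2, 0.1]  # Stands in for a query like "How do I send an item back?"
print(top_k(query_vec, doc_vecs, k=1))  # ['returns_policy']
```

Larger systems usually delegate this ranking to a vector index instead of scoring every document, but the similarity idea is the same.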

The Components of RAG

At its heart, RAG is built on two key components: a retriever and a generator (the LLM).

Together, they operate in a straightforward yet powerful two-step process. In the Retrieval Phase, the retriever scans a document database and gathers snippets relevant to the user's query. In the Generation Phase, the generator uses those snippets to compose a coherent, detailed answer that integrates the retrieved information.
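The two phases can be sketched in a few lines of Python. This is a minimal illustration rather than a production design. Retrieval uses a naive keyword-overlap score in place of embeddings, and `echo_model` is a hypothetical stand-in for whatever LLM call a real system would make. What matters is the data flow: the retrieved snippets are inserted into the prompt before generation.

```python
import re
from typing import Callable

def tokenize(text: str) -> set[str]:
    """Lower-case the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Retrieval Phase: rank documents by the number of words they share with the query."""
    query_terms = tokenize(query)
    ranked = sorted(documents, key=lambda doc: len(query_terms & tokenize(doc)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, snippets: list[str]) -> str:
    """Augment the user's question with the retrieved snippets."""
    context = "\n".join(f"- {s}" for s in snippets)
    return f"Answer using the context below.\nContext:\n{context}\nQuestion: {query}"

def rag_answer(query: str, documents: list[str], generate: Callable[[str], str]) -> str:
    """Generation Phase: pass the augmented prompt to a language model."""
    return generate(build_prompt(query, retrieve(query, documents)))

def echo_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; it returns the prompt unchanged."""
    return prompt

documents = [
    "Orders ship within two business days.",
    "Items can be returned within 30 days of delivery.",
    "Gift cards never expire.",
]
print(rag_answer("How many days do I have to return items?", documents, echo_model))
```

With this toy scorer, the returns snippet ranks first because it shares two words ("items" and "days") with the question. Swapping `echo_model` for a real model client would not change the rest of the pipeline.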

This dual-process architecture allows RAG to balance creativity with factual accuracy, making it particularly valuable in fields where precision is crucial.

The Evolution of RAG Technology

RAG is a relatively new entrant in the AI domain. The term was introduced by Lewis et al. in a 2020 paper, but its roots can be traced back to earlier models that combined retrieval and generation.

Over time, the sophistication of both retrieval systems and generative models has increased dramatically.

Advances in training and embedding techniques have turned RAG from a theoretical concept into a practical tool that can search massive amounts of data and deliver accurate responses in real time.

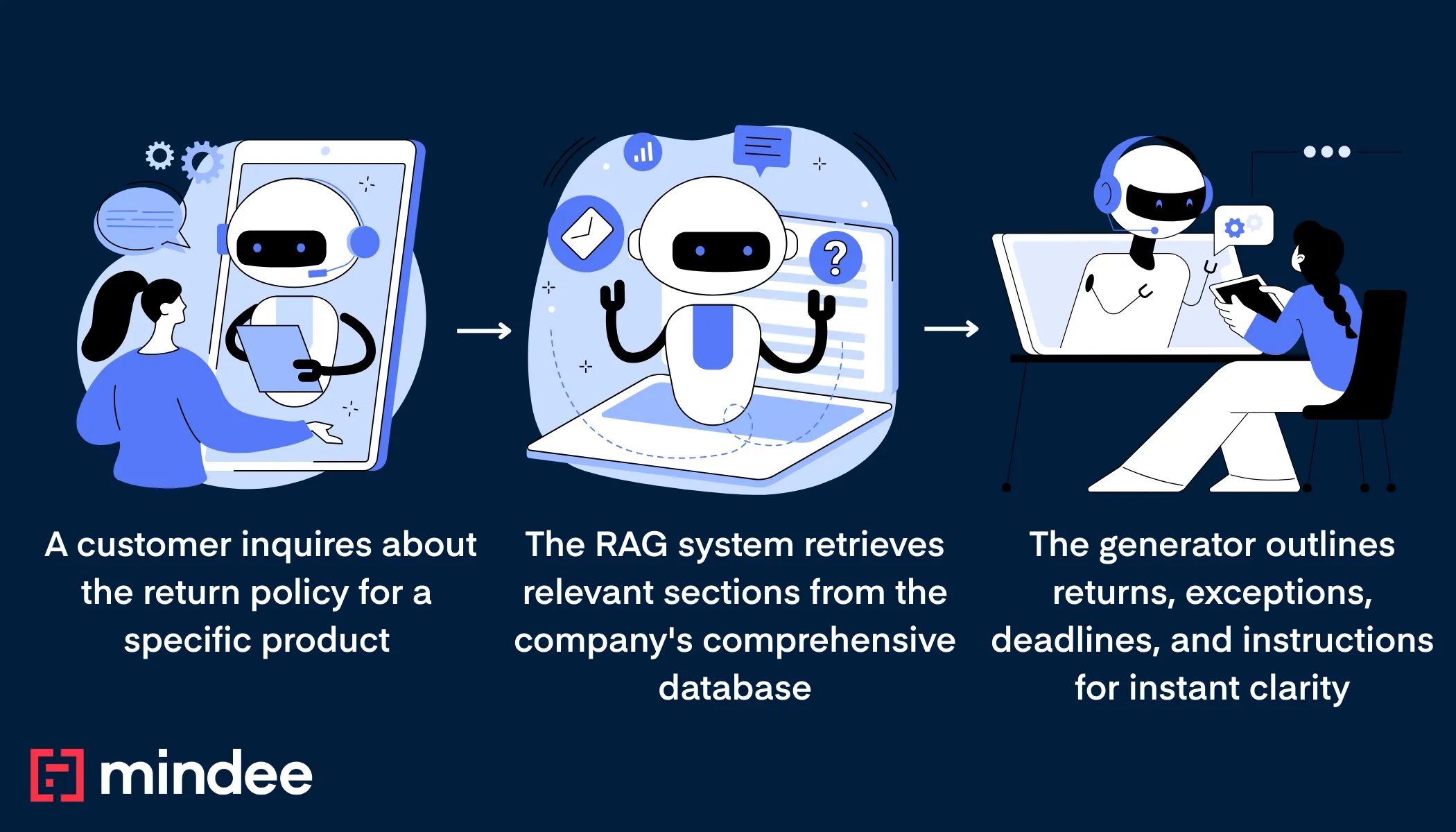

Retrieval-Augmented Generation Example

Imagine a customer service chatbot for an online retailer that uses RAG.
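A minimal sketch of how such a chatbot might decide what to send to its language model is shown below. Everything in it is hypothetical and chosen for illustration, not taken from any particular product: the knowledge base, the `MIN_SCORE` cut-off, and the Jaccard word-overlap score. It shows one common design choice. When nothing relevant is retrieved, the bot hands off to a person instead of letting the model improvise.

```python
import re
from typing import Optional

# Hypothetical knowledge base for an online retailer.
KNOWLEDGE_BASE = {
    "returns": "You can return unused items within 30 days for a full refund.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
    "warranty": "Electronics come with a one-year limited warranty.",
}
MIN_SCORE = 0.1  # Hypothetical relevance cut-off; would need tuning for real traffic.

def words(text: str) -> set[str]:
    """Lower-cased word tokens of a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def jaccard(a: set[str], b: set[str]) -> float:
    """Fraction of distinct words shared by two token sets (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def retrieve_policy(question: str) -> Optional[str]:
    """Return the best-matching policy text, or None if nothing clears MIN_SCORE."""
    q = words(question)
    scores = {key: jaccard(q, words(text)) for key, text in KNOWLEDGE_BASE.items()}
    best_key = max(scores, key=scores.get)
    return KNOWLEDGE_BASE[best_key] if scores[best_key] >= MIN_SCORE else None

def respond(question: str) -> str:
    """Build a grounded prompt for the LLM, or hand off when retrieval finds nothing."""
    policy = retrieve_policy(question)
    if policy is None:
        return "Sorry, I can't find that in our help pages. Connecting you to an agent."
    return f"Using only this policy: '{policy}', answer the customer: {question}"

print(respond("Can I return unused items?"))
print(respond("Do you sell concert tickets?"))
```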

RAG vs. LLM: Understanding the Differences

While both RAG and large language models (LLMs) are used in AI applications, they serve different purposes. Standalone LLMs such as GPT-4o focus on generating human-like text from their input and the knowledge captured during training.

In contrast, RAG integrates retrieval mechanisms to provide more informed and context-aware responses. This makes RAG particularly useful in scenarios where access to specific, up-to-date information is crucial.
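One reason RAG suits fast-changing information is that its knowledge lives in the document store rather than in the model's weights. A newly added document becomes retrievable on the very next query, with no retraining. The toy `DocumentStore` class below illustrates that idea with an in-memory list and word-overlap search. It is hypothetical and does not mirror any real vector-database API.

```python
import re

def _words(text: str) -> set[str]:
    """Lower-cased word tokens of a text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

class DocumentStore:
    """Hypothetical in-memory store standing in for a real search index or vector database."""

    def __init__(self) -> None:
        self._docs: list[str] = []

    def add(self, text: str) -> None:
        """Index a new document; it is searchable immediately, and no model is retrained."""
        self._docs.append(text)

    def search(self, query: str) -> list[str]:
        """Return documents sharing at least one word with the query, best match first."""
        q = _words(query)
        scored = [(len(q & _words(doc)), doc) for doc in self._docs]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [doc for score, doc in scored if score > 0]

store = DocumentStore()
store.add("The spring sale ends on March 31.")
query = "When does the summer sale end?"
print(store.search(query)[0])  # The spring sale ends on March 31.  (only older info exists)
store.add("The summer sale ends on August 31.")
print(store.search(query)[0])  # The summer sale ends on August 31.
```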

Below is a comparison table that highlights the key differences between the two:

The Unique Strengths of LLMs

Large Language Models excel at creating text that mimics human conversation. Their ability to generate fluent and coherent language makes them ideal for applications where the primary goal is to produce text that feels natural and engaging.

However, LLMs sometimes struggle with accuracy and context, especially when dealing with specialized or rapidly changing information. This is where RAG's retrieval capabilities provide a distinct advantage.

RAG's Competitive Edge in Information Retrieval

RAG's primary strength lies in its ability to tap into a vast reservoir of data to retrieve relevant information.

This capability allows RAG to craft responses that are not only contextually appropriate but also grounded in the retrieved facts.

In environments where precision is critical, such as legal, medical, or technical fields, RAG offers a competitive edge over traditional LLMs because its answers draw on current, authoritative sources that can be checked.
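In precision-critical settings, one common practice is to number the retrieved sources in the prompt and ask the model to cite them. A reviewer can then check each claim against the original document. The sketch below builds such a prompt and maps citation markers in a reply back to document ids. The `Source` type, the example snippets and ids, the model reply, and the `[n]` convention are all hypothetical.

```python
import re
from dataclasses import dataclass

@dataclass
class Source:
    doc_id: str  # Identifier of the original document, kept so reviewers can look it up.
    text: str    # The retrieved snippet passed to the model.

def build_cited_prompt(question: str, sources: list[Source]) -> str:
    """Number each retrieved source so the model can cite it as [1], [2], ..."""
    numbered = [f"[{i}] ({s.doc_id}) {s.text}" for i, s in enumerate(sources, start=1)]
    return (
        "Answer using only the numbered sources below and cite each claim, e.g. [1].\n"
        + "\n".join(numbered)
        + f"\nQuestion: {question}"
    )

def cited_doc_ids(answer: str, sources: list[Source]) -> list[str]:
    """Map [n] markers in a model's answer back to document ids, ignoring invalid numbers."""
    ids: list[str] = []
    for number in re.findall(r"\[(\d+)\]", answer):
        n = int(number)
        if 1 <= n <= len(sources) and sources[n - 1].doc_id not in ids:
            ids.append(sources[n - 1].doc_id)
    return ids

sources = [
    Source("manual-ch3", "The pump must be serviced every 500 operating hours."),
    Source("bulletin-17", "For model X, the service interval is shortened to 400 hours."),
]
print(build_cited_prompt("How often should a model X pump be serviced?", sources))
model_answer = "Every 400 hours [2], instead of the usual 500 [1]."  # Hypothetical model reply.
print(cited_doc_ids(model_answer, sources))  # ['bulletin-17', 'manual-ch3']
```

Citations do not make an answer correct by themselves. They make it verifiable, which is what these fields need.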

Comparing Use Cases

While both RAG and LLMs have overlapping applications, they are often best suited for different tasks:

- ✍️ LLMs are commonly used in creative writing, storytelling, and conversational agents where personality and tone are key.

- ⏱️ RAG, however, thrives in situations where up-to-the-minute information is required, such as real-time data analysis or customer service interactions.

Understanding these distinctions helps businesses and developers choose the right model for their specific needs.

Applications of RAG Technology

RAG technology is versatile and can be applied across various industries. Here are some of its key applications:

👩‍💻 Enhancing Customer Support

In customer service, RAG can significantly improve response times and accuracy.

By accessing a database of product information, previous customer interactions, and troubleshooting guides, RAG-powered chatbots can provide quick and precise solutions to customer queries.

This capability not only enhances customer satisfaction but also reduces the operational costs associated with human-led support teams.

🩻 Advancements in Healthcare

In the healthcare sector, RAG models can assist medical professionals by retrieving and summarizing the latest research studies, patient records, and treatment guidelines.

This aids in making informed decisions and improving patient care. Moreover, by streamlining access to critical information, RAG can help reduce diagnostic errors and optimize treatment plans, contributing to better patient outcomes.

🎓 Educational Tools

For educational purposes, RAG can be used to develop intelligent tutoring systems that provide personalized learning experiences.

By retrieving relevant educational content and generating tailored explanations, RAG can enhance the learning process for students.

These systems can adapt to individual learning paces and styles, offering a more engaging and effective educational journey for learners of all ages.

🏛️ Legal and Regulatory Compliance

In the legal domain, RAG can be employed to quickly navigate through extensive legal documents and regulatory guidelines.

By retrieving pertinent case law or regulatory information, RAG systems can assist legal professionals in crafting compelling arguments or ensuring compliance. This not only saves time but also improves the accuracy of legal research and advice.

🔬 Research and Development

RAG is becoming an invaluable tool in research and development across various scientific fields.

By facilitating the retrieval of relevant literature and data, RAG systems can help researchers identify trends, synthesize information, and generate innovative solutions. This accelerates the pace of discovery and enhances the potential for groundbreaking advancements.

The Future of RAG

As the field of AI continues to advance, the future of RAG looks promising. Here are some potential developments to watch for:

Increased Integration with GenAI

Generative AI, or GenAI, is rapidly evolving, and its integration with RAG could lead to more sophisticated AI systems.

By combining GenAI's creativity with RAG's information retrieval capabilities, future AI models could offer even more nuanced and insightful interactions. This synergy could result in AI systems that not only inform but also entertain and engage users on a deeper level.

Enhanced RAG Applications

The scope of RAG applications is likely to expand as more industries recognize its potential. From finance to entertainment, RAG could revolutionize the way businesses interact with data and deliver services.

By continually refining its algorithms and expanding its data sources, RAG technology could become an integral part of digital transformation strategies across many sectors.

RAG and NLP: A Powerful Combination

Natural language processing (NLP) plays a crucial role in RAG's capabilities. As NLP technologies improve, RAG models will become even better at understanding and generating human-like text. This will make them valuable tools in a range of communication-focused applications.

This progress will improve RAG's ability to understand complex queries and generate responses that are not only accurate but also linguistically sophisticated.

Ethical Considerations and AI Governance

As RAG technology advances, ethical considerations and governance will become increasingly important. Ensuring data privacy, avoiding bias, and maintaining transparency will be essential to building trust in RAG applications.

Establishing robust ethical frameworks and governance policies will be essential to guide the responsible development and deployment of RAG systems in the future.

Collaborative AI Systems

The future may see the emergence of collaborative AI systems that integrate RAG with other AI technologies to build end-to-end solutions. By drawing on the strengths of different AI models, these systems could deliver better services that address complex challenges seamlessly.

This collaboration could open new frontiers in AI-driven innovation and efficiency.

In summary, the future of RAG is promising, with vast potential for enterprise applications and improved customer interactions.

As we continue to explore and develop this exciting technology, the possibilities for improving semantic understanding and delivering accurate responses are vast.

The continued advancement of RAG and its integration into many fields will undoubtedly shape how we interact with technology and language-based information!
